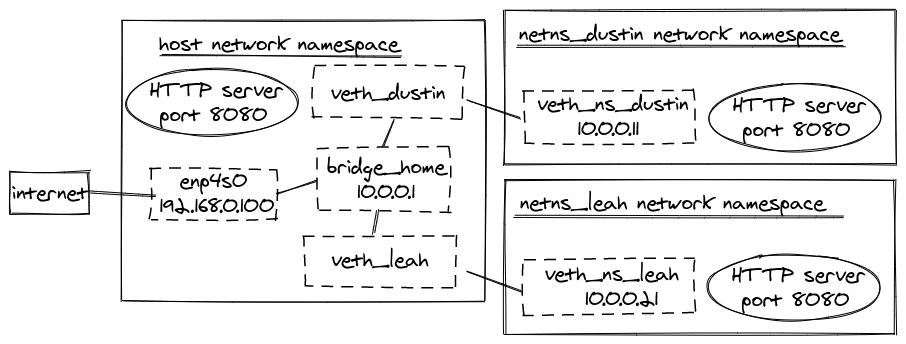

When created, each Service is assigned a unique IP address (also called the clusterIP). This address is tied to the lifespan of the Service and will not change while the Service is alive. Pods can be configured to talk to the Service, and know that communication to the Service will be automatically load-balanced out to some Pod that is a member of the Service.

Like all other Kubernetes objects, a Service can be defined in a YAML or JSON file that contains the necessary definitions (a Service can also be created from the command line alone, but this is not the recommended practice). Let's create a Service definition for the nginx Pods. It may look like the following:

    apiVersion: v1
    kind: Service
    metadata:
      name: my-nginx
      labels:
        run: my-nginx
    spec:
      ports:
      - port: 80
        protocol: TCP
      selector:
        run: my-nginx

Save the definition as nginx-svc.yaml and apply it:

    $ kubectl apply -f nginx-svc.yaml

This specification will create a Service which targets TCP port 80 on any Pod with the run: my-nginx label, and exposes it on an abstracted Service port. Here targetPort is the port the container accepts traffic on, and port is the abstracted Service port, which can be any port other Pods use to access the Service. Because the manifest omits targetPort, it defaults to the value of port, 80.
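To make the difference between port and targetPort concrete, here is a variant of the manifest above in which the two values differ. It is only a sketch: the Service port 8080 is an assumed value chosen for illustration and does not come from the original example.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: my-nginx
  labels:
    run: my-nginx
spec:
  selector:
    run: my-nginx        # selects every Pod carrying this label
  ports:
  - protocol: TCP
    port: 8080           # abstracted Service port (assumed value for illustration)
    targetPort: 80       # port the nginx container accepts traffic on
```

With a definition like this, other Pods would reach the Service on port 8080, and the traffic would be forwarded to port 80 on the selected Pods.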

If you use a Deployment to run your app, it can create and destroy Pods dynamically. Each Pod gets its own IP address; however, in a Deployment, the set of Pods running at one moment could differ from the set of Pods running that application a moment later. This leads to a problem: if some set of Pods (call them “backends”) provides functionality to other Pods (call them “frontends”) inside your cluster, how do the frontends find out and keep track of which IP address to connect to, so that they can use the backend part of the workload?

Enter Services. A Kubernetes Service is an abstraction that defines a logical set of Pods running somewhere in your cluster that all provide the same functionality.

Pods are mortal: they are born, and when they die, they are not resurrected.

Say you have Pods running nginx in a flat, cluster-wide address space. In theory, you could talk to these Pods directly, but what happens when a node dies? The Pods die with it, and the Deployment will create new ones with different IPs.
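For reference, a Deployment that runs such nginx Pods might look like the sketch below. The original text does not show this manifest, so the name, replica count, and image are assumptions; the only detail taken from the text is the run: my-nginx label, which is what the Service selector matches.

```yaml
# Hypothetical Deployment for illustration; name, replicas and image are assumptions.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: my-nginx
spec:
  replicas: 2
  selector:
    matchLabels:
      run: my-nginx
  template:
    metadata:
      labels:
        run: my-nginx      # label the Service selector matches
    spec:
      containers:
      - name: my-nginx
        image: nginx
        ports:
        - containerPort: 80   # port the container accepts traffic on
```

Whenever the Deployment replaces a Pod, the new Pod gets a new IP address but carries the same label, so it can be picked up by any Service that selects on run: my-nginx.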